Companies in the Automotive Sector are Leveraging AI to Keep Drivers Safe on the Road

According to the World Health Organization, about 1.35 million people are killed each year in crashes on the world’s roads, and as many as 50 million others are seriously injured. In the United States, fatalities rose dramatically during the pandemic, leading to the largest six-month spike ever recorded, according to estimates from the U.S. Department of Transportation. Speeding, distraction, impaired driving, and not wearing a seatbelt were among the top causes.

Artificial intelligence is already being used to enhance driving safety, from cell phone apps that monitor behavior behind the wheel and reward safe drivers with perks to connected vehicles that communicate with each other and with road infrastructure. But what lies ahead? Today we will look at some of the latest AI technologies used to keep drivers safe and the data annotation required to create them.

Intelligent Speed Assistance

Intelligent speed assistance (ISA) uses a speed sign-recognition video camera and/or GPS-linked speed limit data to advise drivers of the current speed limit and automatically limit the speed of the vehicle as needed. ISA systems do not automatically apply the brakes but simply limit engine power, preventing the vehicle from accelerating past the current speed limit unless overridden. The technology will be mandatory in all new vehicles in the European Union beginning in July but has yet to take hold in the United States.
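To make the "limit engine power, never brake" behavior concrete, here is a minimal sketch in Python. It is not taken from any production ISA system: the names `IsaInput`, `isa_throttle`, and `TAPER_BAND_KPH`, the override flag, and the tapering rule are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed width of the band below the limit in which power is tapered.
TAPER_BAND_KPH = 5.0


@dataclass
class IsaInput:
    speed_kph: float            # current vehicle speed
    limit_kph: Optional[float]  # current speed limit, None if unknown
    requested_throttle: float   # driver pedal request, 0.0 to 1.0
    driver_override: bool       # hypothetical "driver overrides ISA" signal


def isa_throttle(inp: IsaInput) -> float:
    """Return the throttle passed to the engine. Brakes are never applied."""
    request = min(max(inp.requested_throttle, 0.0), 1.0)

    # No known limit, or the driver has overridden: pass the request through.
    if inp.limit_kph is None or inp.driver_override:
        return request

    # At or above the limit: cut engine power so the vehicle cannot accelerate.
    if inp.speed_kph >= inp.limit_kph:
        return 0.0

    # Just below the limit: scale the request down as the limit approaches.
    margin = inp.limit_kph - inp.speed_kph
    return request * min(margin / TAPER_BAND_KPH, 1.0)
```

Under these assumptions, a driver requesting 80% throttle at 30 km/h in a 50 km/h zone gets the full request, the same request at 50 km/h returns zero engine power, and setting `driver_override=True` passes the request through unchanged.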

ISA can use cameras alone to identify speed limits through traffic sign recognition. Cameras, however, have limited range, can be blinded by rain or snow, and perform poorly when they need to recognize conditional and variable speed limits, such as limits that apply only in specific weather conditions or to specific vehicle types. Advanced digital map data addresses these issues: it contains verified speed limit data that ‘sees’ beyond camera range and performs in all conditions. The map data is fused with the vehicle’s ADAS functions so the vehicle can prepare for upcoming speed limit changes. The result is not only greater vehicle safety but also increased driver comfort and energy efficiency.
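One way to picture how camera and map sources might be combined is the small Python sketch below. The policy shown (trust a confident camera reading, otherwise fall back to the map) is only one illustrative choice, and `LimitReading`, `fuse_speed_limit`, and the confidence threshold are hypothetical names and values, not part of any real ADAS interface.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LimitReading:
    limit_kph: int
    confidence: float  # hypothetical score between 0.0 and 1.0


def fuse_speed_limit(
    camera: Optional[LimitReading],
    map_data: Optional[LimitReading],
    min_camera_confidence: float = 0.8,  # assumed threshold
) -> Optional[int]:
    """Pick one speed limit from a camera reading and a map reading."""
    # A confident camera reading reflects the sign actually seen on the road.
    if camera is not None and camera.confidence >= min_camera_confidence:
        return camera.limit_kph
    # Camera missing or unsure (rain, snow, sign out of range): use the map.
    if map_data is not None:
        return map_data.limit_kph
    # No map coverage: a weak camera reading is still better than nothing.
    return camera.limit_kph if camera is not None else None
```

For example, `fuse_speed_limit(LimitReading(80, 0.3), LimitReading(100, 1.0))` returns the map value of 100 because the camera reading falls below the assumed threshold.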

Tracking Driver Behavior Behind the Wheel

The National Safety Council says at least nine people in the U.S. die and another 100 are injured every day in crashes caused by distracted driving. In-vehicle technologies such as dashboard touchscreens have contributed to this enormous safety threat, but consumers are fond of these technologies, and they aren’t going away. There is also a pantheon of other distractions behind the wheel that vary greatly in form and severity. Illegal cell phone use, such as texting while driving, tops the list. But there are many other possibilities, such as “rubbernecking” at incidents outside the vehicle, interacting with children or pets inside it, fiddling with the radio, and eating while driving.

Software algorithms can address these issues by using image processing and sensor input to synthesize, in real time, selected information about the driver and the interior of the vehicle. Engineers are developing these algorithms to predict as much human behavior as possible, even though that behavior is sometimes unpredictable and irrational.

These algorithms are critical because they serve two main purposes: to provide output to the driver in the form of alerts or other information; and to specify the way the system should react to control the vehicle (brake, steer, or other safety-related navigation commands).
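The two purposes described above, alerting the driver and deciding how the system should react, can be sketched as a simple decision function. This is a toy illustration under stated assumptions: the labels, thresholds, and the names `CabinObservation`, `Action`, and `decide_action` are invented for the example and do not describe any specific driver-monitoring product.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NONE = "none"            # nothing to do
    ALERT = "alert"          # output to the driver (chime, icon, message)
    INTERVENE = "intervene"  # ask the vehicle to react (e.g. slow down)


@dataclass
class CabinObservation:
    label: str         # hypothetical classifier output, e.g. "phone_use"
    confidence: float  # classifier confidence, 0.0 to 1.0
    duration_s: float  # how long the behavior has been observed


# Hypothetical set of labels treated as distractions.
DISTRACTION_LABELS = {"phone_use", "eating", "looking_away"}


def decide_action(
    obs: CabinObservation,
    min_confidence: float = 0.7,     # assumed threshold
    alert_after_s: float = 2.0,      # assumed threshold
    intervene_after_s: float = 5.0,  # assumed threshold
) -> Action:
    """Map one in-cabin observation to a system response."""
    if obs.label not in DISTRACTION_LABELS or obs.confidence < min_confidence:
        return Action.NONE
    if obs.duration_s >= intervene_after_s:
        return Action.INTERVENE
    if obs.duration_s >= alert_after_s:
        return Action.ALERT
    return Action.NONE
```

A real system would weigh many more signals, but the shape is the same: perception output goes in, and either a driver-facing alert or a vehicle-control request comes out.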

Using AI and visual AI (camera-based sensors, for example), it is possible to quickly ascertain what is going on inside the cabin and to continually build use cases, so we can understand when something may interfere with driving and create new ways to alert the driver to it.

What Types of Data Annotation are Required to Create Such Technologies?

When we look at a product like intelligent speed assistance, the cameras that allow the technology to see the road must be able to identify other vehicles and the distance from one car to another. This requires a lot of video labeling and image annotation, with methods ranging from simple 2D boxes, which involve drawing a bounding box around each car in the frame, to semantic segmentation. Semantic segmentation is a more accurate type of image annotation, but it is also more time-consuming, because the annotator needs to differentiate all the content in the image.
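The difference between a 2D box and a segmentation mask is easy to see on a tiny example. The sketch below uses a hand-made 5x5 mask where `1` marks car pixels; the mask, the class id, and the helper names `mask_to_box`, `box_area`, and `mask_area` are all assumptions made for illustration, not a real annotation format.

```python
from typing import List, Optional, Tuple

# (x_min, y_min, x_max, y_max) in inclusive pixel coordinates
Box = Tuple[int, int, int, int]

CAR = 1  # hypothetical class id for "car"; 0 is background

# Toy segmentation mask: every pixel carries a class label.
MASK = [
    [0, 0, 0, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]


def mask_to_box(mask: List[List[int]], class_id: int) -> Optional[Box]:
    """Return the tightest 2D box around all pixels of one class."""
    xs = [x for row in mask for x, value in enumerate(row) if value == class_id]
    ys = [y for y, row in enumerate(mask) if class_id in row]
    if not xs:
        return None
    return (min(xs), min(ys), max(xs), max(ys))


def box_area(box: Box) -> int:
    """Number of pixels covered by an inclusive-coordinate box."""
    x_min, y_min, x_max, y_max = box
    return (x_max - x_min + 1) * (y_max - y_min + 1)


def mask_area(mask: List[List[int]], class_id: int) -> int:
    """Number of pixels labeled with the given class."""
    return sum(row.count(class_id) for row in mask)
```

Here `mask_to_box(MASK, CAR)` gives `(1, 1, 3, 3)`, a box covering 9 pixels, while the mask labels only 7 of them as car. The box is quick to draw but includes background, which is the accuracy-versus-effort trade-off between the two annotation types.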

The driver tracking tool would also require labeling to identify objects like cell phones, food, and many others. More advanced types of data annotation such as scene classification and object tracking would also be necessary.

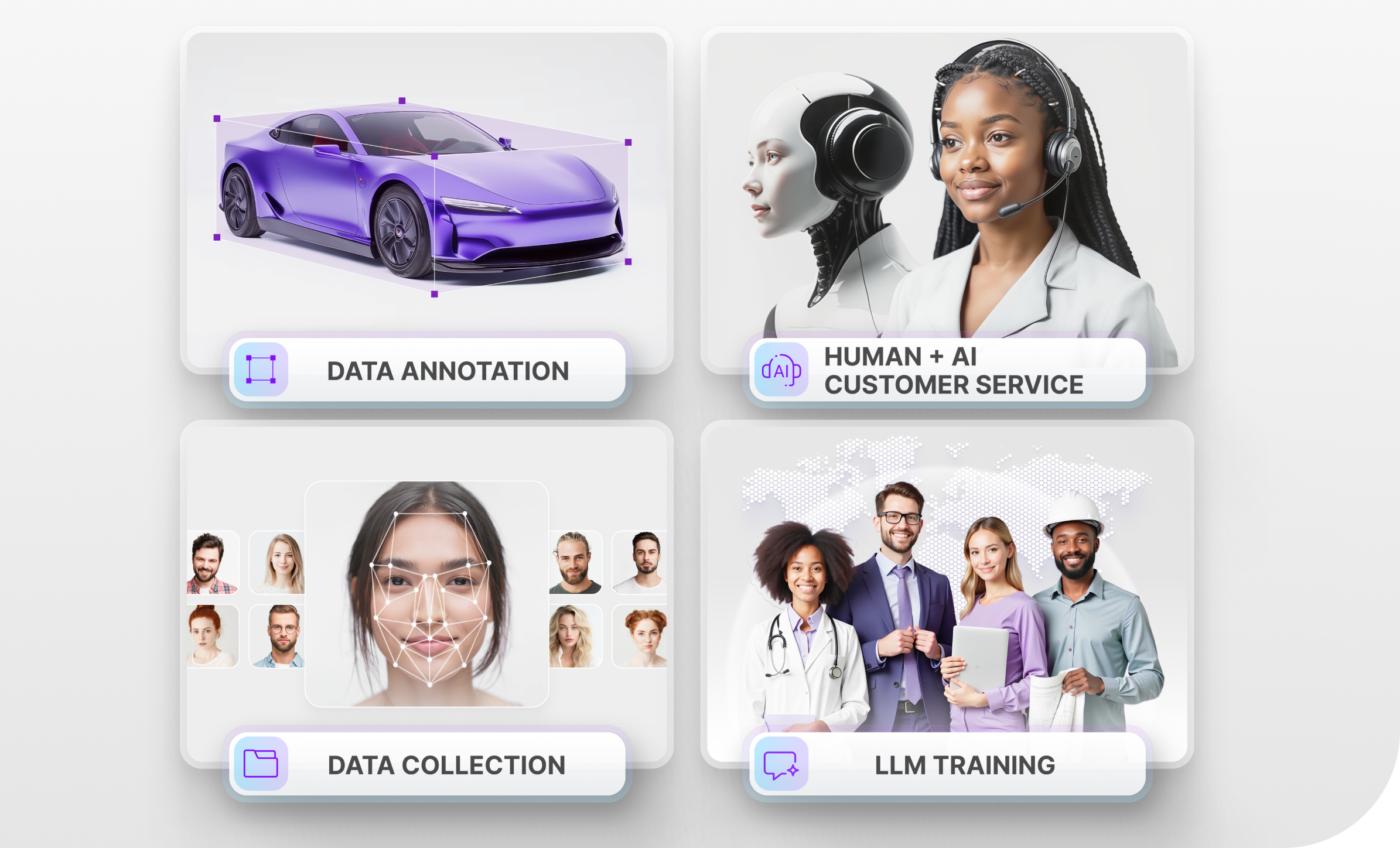

Trust Mindy Support With All of Your Data Annotation Needs

Mindy Support is a global company for data annotation and business process outsourcing, trusted by several Fortune 500 and GAFAM companies, as well as innovative startups. With nine years of experience under our belt and offices and representatives in Cyprus, Poland, Romania, the Netherlands, India, and Ukraine, Mindy Support’s team now stands strong with 2000+ professionals helping companies with their most advanced data annotation challenges.