Using Data Annotation to Solve Some of the Challenges of Self-Driving Vehicles

Recently, the masterminds behind Toyota’s self-driving cars gave an interview to IEEE Spectrum. They discussed some of the challenges that AI has yet to overcome and that are keeping autonomous vehicles from going mainstream. While some of the issues they mention still have many researchers guessing, many can be solved by teaching machine learning algorithms to recognize everything in the vehicle’s surroundings and make the correct decisions based on that recognition.

Solving the Perception Problem

Currently, autonomous vehicles use cameras to capture semantic information, LiDAR to establish the proximity of objects in their field of view, and radar to measure the velocities of other vehicles. The problem is that an AI interprets this data differently from a human, who often looks at the world from different positions. For example, an AI needs to be able to figure out which range estimate belongs to which pixel. In other words, the car on the road needs to be able to differentiate between a person painted on the side of another car and an actual physical person crossing the road. According to Wolfram Burgard, Vice President of Automated Driving Technology at Toyota Research Institute (TRI), this problem could be solved by providing more data.
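To make the pixel-to-range matching above a little more concrete, here is a minimal sketch of how LiDAR returns could be projected into a camera image using a simple pinhole model. It is an illustration under stated assumptions, not how any particular vehicle does it: the function name `project_points`, the intrinsics `fx`, `fy`, `cx`, `cy`, and the assumption that the points are already expressed in the camera frame are all ours.

```python
from typing import Dict, Iterable, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in the camera frame, in metres
Pixel = Tuple[int, int]             # (column, row) in the image


def project_points(
    points: Iterable[Point],
    fx: float, fy: float, cx: float, cy: float,
    width: int, height: int,
) -> Dict[Pixel, float]:
    """Map each pixel hit by a LiDAR return to the nearest depth seen there.

    Assumes a pinhole camera with z pointing forward and no lens distortion.
    """
    depth_at: Dict[Pixel, float] = {}
    for x, y, z in points:
        if z <= 0:
            # The point is behind the camera and cannot appear in the image.
            continue
        u = int(round(fx * x / z + cx))
        v = int(round(fy * y / z + cy))
        if 0 <= u < width and 0 <= v < height:
            # Keep the closest return when several points land on one pixel.
            if (u, v) not in depth_at or z < depth_at[(u, v)]:
                depth_at[(u, v)] = z
    return depth_at
```

With a depth value attached to the pixels of a detected "person", a downstream check could notice that a painted figure shares the flat depth of the vehicle it is painted on. That check is beyond this sketch and would itself need annotated data to validate.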

To obtain the volume of data needed to train the ML algorithms, researchers need to have many thousands of images annotated. The annotation could range from simple 2D bounding boxes all the way to more complex methods, such as semantic segmentation. Even though autonomous vehicle companies like Waymo, Argo.ai, Tesla, and many others have already amassed large amounts of such annotated data, it is still not proving to be enough to allow AIs to overcome these challenges.
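As a rough illustration of the simplest annotation type mentioned above, the sketch below represents a 2D bounding box and computes intersection over union (IoU), a common way to measure how closely two boxes agree, for example when comparing two annotators' labels for the same object. The `Box` class and its field names are hypothetical and do not come from any specific annotation tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """An axis-aligned 2D bounding box in pixel coordinates."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def area(self) -> float:
        # Clamp at zero so an "inverted" box counts as empty.
        width = max(0.0, self.x_max - self.x_min)
        height = max(0.0, self.y_max - self.y_min)
        return width * height


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes, between 0.0 and 1.0."""
    overlap = Box(
        "overlap",
        max(a.x_min, b.x_min),
        max(a.y_min, b.y_min),
        min(a.x_max, b.x_max),
        min(a.y_max, b.y_max),
    )
    intersection = overlap.area()
    union = a.area() + b.area() - intersection
    return intersection / union if union > 0 else 0.0
```

Semantic segmentation replaces the four box coordinates with a class label for every pixel, which is one reason it takes so much longer to annotate.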

Substituting Pattern Recognition for Reasoning

As it stands now, AI systems cannot look at a situation on the road and come to a reasonable conclusion about what will happen next in the way that a human can. For example, if a human driver sees a parent pushing a stroller, they understand that this person is not likely to cross the street at a red light or otherwise jaywalk. However, if they see a couple of teenagers with skateboards, a human driver will also understand that there is a high probability that they may try to cross the street even when they are not supposed to. Currently, an AI is unable to make this distinction.

There is real disagreement in the research community as to whether more data will help create an AI product capable of thinking. Some companies, such as Nvidia, are trying to use pattern matching as a substitute for reasoning. To return to our example: they would feed annotated images of teenagers, and of other people who are more likely to jaywalk, into the ML algorithms so that the algorithms could then recognize them. Since there are many possible groups of people who require this distinction, they break each segment down into blocks. For example, teenagers are one block, young adults are another, and so on. The problem is that the car needs to be able to recognize each of these different categories and make the appropriate decisions.
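The block-based approach described above can be pictured as a lookup from a recognized category to a learned behavior score. The sketch below is purely illustrative: the category names, the scores, and the threshold are placeholders invented for this example, not figures from Nvidia or from any real system.

```python
from typing import Dict, Optional

# Hypothetical blocks with placeholder scores, invented for illustration only.
JAYWALK_PRIOR: Dict[str, float] = {
    "teenager_with_skateboard": 0.6,
    "parent_with_stroller": 0.05,
}


def jaywalk_prior(label: str) -> Optional[float]:
    """Return the stored score for a recognized block, or None if unseen."""
    return JAYWALK_PRIOR.get(label)


def should_slow_down(label: str, threshold: float = 0.3) -> bool:
    """Decide whether to slow down for a pedestrian with the given label."""
    prior = jaywalk_prior(label)
    if prior is None:
        # No pattern matches this label, so the table has nothing to say.
        # Falling back to caution is our assumption, not a learned behavior.
        return True
    return prior >= threshold
```

A label such as `"person_in_costume"` has no entry, so the lookup returns `None` and only the hard-coded fallback decides what happens. That gap is where pattern matching stops and reasoning would have to take over.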

Wolfram Burgard asserts that such pattern matching methods are simply not a substitute for the deeper intelligence needed. A car could be trained to identify teenagers and other people as likely jaywalkers, but only if the patterns it was trained on actually appear on the road. This will not always be the case. Therefore, the car needs to be able to actually think like a human and know how to make decisions about things it hasn’t been trained to deal with. Either way, an incredible amount of data still needs to be accurately annotated and fed into the ML algorithms before results can be seen.

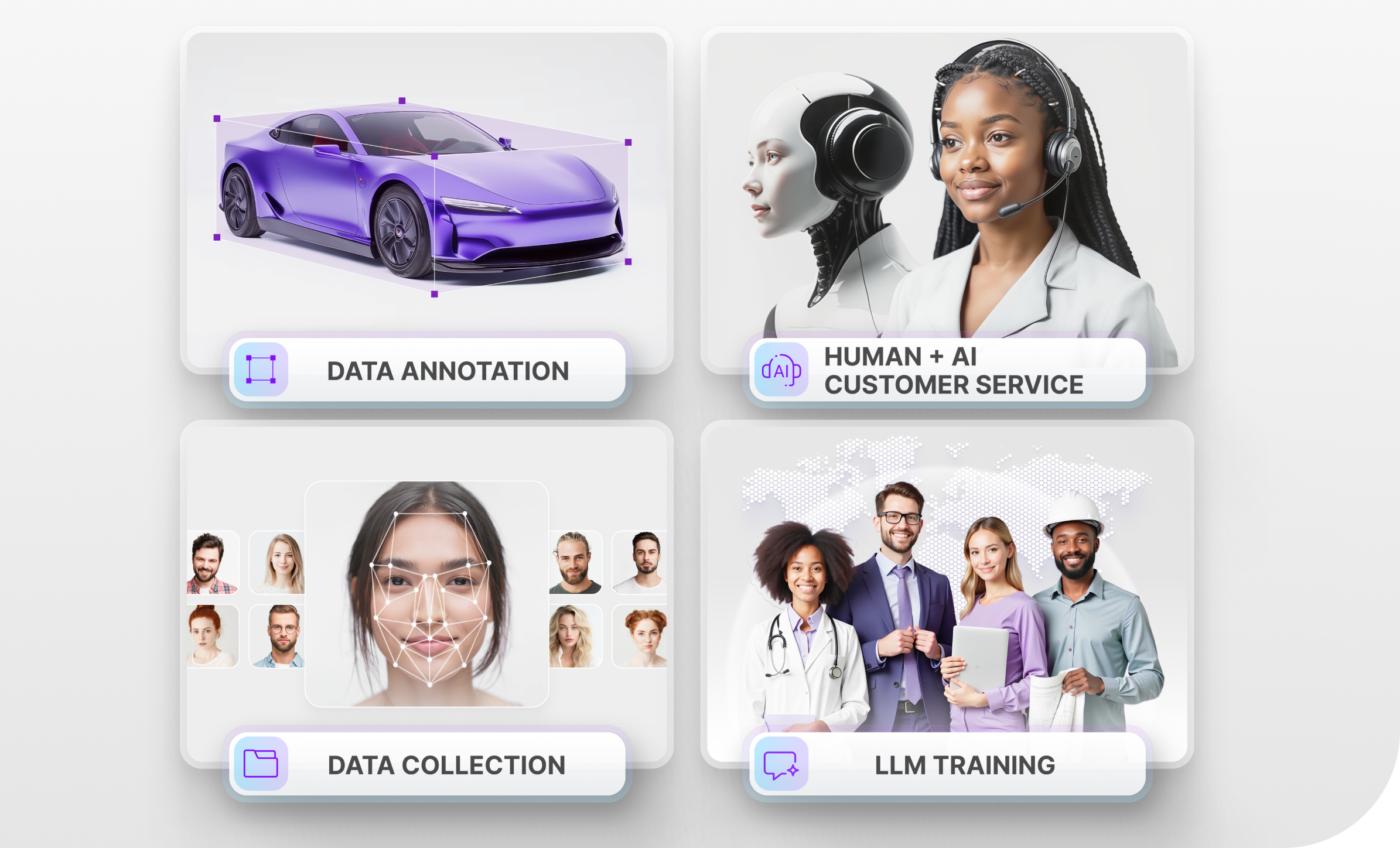

Teams Available to Start Your Data Annotation Today

We understand that providing ground-truth-quality annotation for self-driving cars is a very time-consuming process, but it is essential to the overall success of the project. Get the best results by training and validating your algorithms with accurate annotations made by Mindy Support. Our teams are available to start your data annotation today. We have a comprehensive QA process in place to make sure that everything is annotated correctly the first time around, ensuring the accuracy of the data and keeping your project on schedule. Our expertise is recognized by several Fortune 500 and GAFAM companies.