Precise Data Annotation Paves the Way for Computer Vision in AI Drones

Over the last two to three years, artificial intelligence has been a game changer for the drone industry. Technologies like computer vision allow drones to detect and track objects of interest in real time, which opens the door to use cases that were previously impractical. These capabilities can help improve emergency response, animal conservation, perimeter security, site inspections, and much more. In this article, we will delve deeper into computer vision and explore the data annotation that is needed to accurately train AI systems.

What is Computer Vision?

Computer vision is a field of AI in which machine learning algorithms are trained to identify, interpret and track objects in an image or video. The models learn to recognize patterns by being fed thousands or even millions of annotated images. This allows the algorithms to build a profile for each object they will need to identify in the real world.

Thanks to advances in machine learning and neural networks, computer vision has made great leaps in recent years and can often match or surpass the human eye in detecting and labeling certain objects. One of the driving factors behind this growth is the sheer amount of data we now generate, which can be annotated and used to train computer vision models more accurately.

What are the Applications of Computer Vision in Drones?

With the help of drones equipped with computer vision, tech companies are changing how work gets done and making the lives of their clients across industries a lot easier. Here are a few popular applications of AI drones:

- Agriculture – Drones can fly over fields and take high-resolution photos, which are analyzed to detect pests, identify plant diseases and even estimate yield. The precise information the drones gather allows farmers to spot problems before they imperil a harvest.

- Construction – Companies in the construction industry use drones to monitor the pace of construction, identify potential safety hazards, track supplies and materials on the site, and much more.

- Smart Cities – A smart city is a technologically modern urban area that uses different types of electronic methods and sensors to collect specific data. Drones can be used to help recognize and relieve traffic congestion. An AI drone equipped with route-planning technology can capture images of traffic scenes, which are then processed by convolutional neural networks to provide insights on how to relieve the congestion.

What Types of Data Annotation are Necessary to Create Computer Vision Drones?

The types of data annotation necessary for a computer vision project depend on the capabilities the drone needs to have. For example, let’s take a look at the use of computer-vision drones in agriculture. As the drone flies over the farmland, it needs to accurately identify various types of crops, weeds, problematic growing areas, signs of drought, and many other fine-grained details. This means that semantic segmentation will be necessary, since it assigns a class label to every pixel in an image and gives the AI system a pixel-accurate understanding of its environment.
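To make the idea of pixel-level labels concrete, here is a minimal sketch of what a semantic segmentation annotation could look like. The class names, class ids, and the tiny mask are illustrative assumptions, not taken from any real dataset; a real mask would have one entry per pixel of a high-resolution photo.

```python
from collections import Counter

# Hypothetical class ids for an agricultural scene (illustrative only).
CLASSES = {0: "soil", 1: "crop", 2: "weed"}

# A tiny 4x6 segmentation mask: one class id per pixel.
mask = [
    [1, 1, 1, 0, 0, 2],
    [1, 1, 1, 0, 2, 2],
    [1, 1, 0, 0, 0, 2],
    [1, 1, 0, 0, 0, 0],
]


def class_coverage(mask, classes):
    """Return the fraction of pixels assigned to each class name."""
    counts = Counter(pixel for row in mask for pixel in row)
    total = sum(counts.values())
    return {name: counts.get(class_id, 0) / total
            for class_id, name in classes.items()}


coverage = class_coverage(mask, CLASSES)
for name, fraction in coverage.items():
    print(f"{name}: {fraction:.1%} of pixels")
```

Because every pixel carries a label, questions such as "how much of this field is covered by weeds?" reduce to counting pixels, which is something bounding boxes alone cannot answer precisely.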

If we take a look at an AI drone that is used at construction sites, it may only need to identify the various types of equipment on the job site for inventory purposes. In this situation, simple labeling or bounding boxes will be enough to train the AI. As the name suggests, data annotators draw rectangular boxes around the objects of interest and label each one, which makes it easier for the drone to recognize those objects and associate them with the classes it was trained on.
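A bounding-box annotation can be sketched as a label plus four pixel coordinates. The `BoundingBox` class, the labels, and the coordinates below are hypothetical and only illustrate the shape of the data; real annotation tools export their own formats.

```python
from dataclasses import dataclass


@dataclass
class BoundingBox:
    """One labeled rectangle, in pixel coordinates of the image."""
    label: str
    x_min: int
    y_min: int
    x_max: int
    y_max: int

    def area(self) -> int:
        """Area of the box in pixels (0 if the corners are inverted)."""
        width = max(0, self.x_max - self.x_min)
        height = max(0, self.y_max - self.y_min)
        return width * height


def count_by_label(boxes):
    """Tally how many boxes carry each label, e.g. for an equipment inventory."""
    inventory = {}
    for box in boxes:
        inventory[box.label] = inventory.get(box.label, 0) + 1
    return inventory


# Hypothetical annotations for a single aerial image of a job site.
annotations = [
    BoundingBox("excavator", 40, 60, 220, 200),
    BoundingBox("crane", 300, 10, 380, 240),
    BoundingBox("excavator", 410, 150, 560, 260),
]

inventory = count_by_label(annotations)
print(inventory)
```

For an inventory use case, the count per label is the useful output, which is why the coarser (and cheaper) box annotation is sufficient here.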

Finally, if we take a look at the example of alleviating traffic congestion, both semantic segmentation and bounding boxes may still be necessary, but the training data will most likely consist of videos instead of static images. Video annotation is more time-consuming because a video might be shot at 30 frames per second (fps), or even 60 fps if it’s a high-quality video, and every frame of that video will need to be annotated with bounding boxes, semantic segmentation, or another annotation method. Even a video that is only 30 seconds long, if shot at 60 fps, contains 1,800 frames that need to be annotated. Since this type of work can really drain employees’ time, many companies choose to outsource it to a data annotation company.
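The frame arithmetic above can be sketched in a few lines. The `seconds_per_frame` figure used for the workload estimate is purely an assumption for illustration; actual annotation time varies widely with the scene and the annotation type.

```python
def frames_to_annotate(duration_seconds: float, fps: int) -> int:
    """Number of frames in a clip of the given length and frame rate."""
    return int(duration_seconds * fps)


def annotation_hours(frame_count: int, seconds_per_frame: float) -> float:
    """Rough workload estimate; seconds_per_frame is an assumed average."""
    return frame_count * seconds_per_frame / 3600


frames_60fps = frames_to_annotate(30, 60)  # the 30-second, 60 fps example
frames_30fps = frames_to_annotate(30, 30)  # the same clip shot at 30 fps

print(frames_60fps, frames_30fps)
# Assuming, hypothetically, 20 seconds of annotator time per frame:
print(annotation_hours(frames_60fps, seconds_per_frame=20.0))
```

Even under this modest assumption, a half-minute clip translates into hours of manual work, which illustrates why video annotation is so much more demanding than annotating static images.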

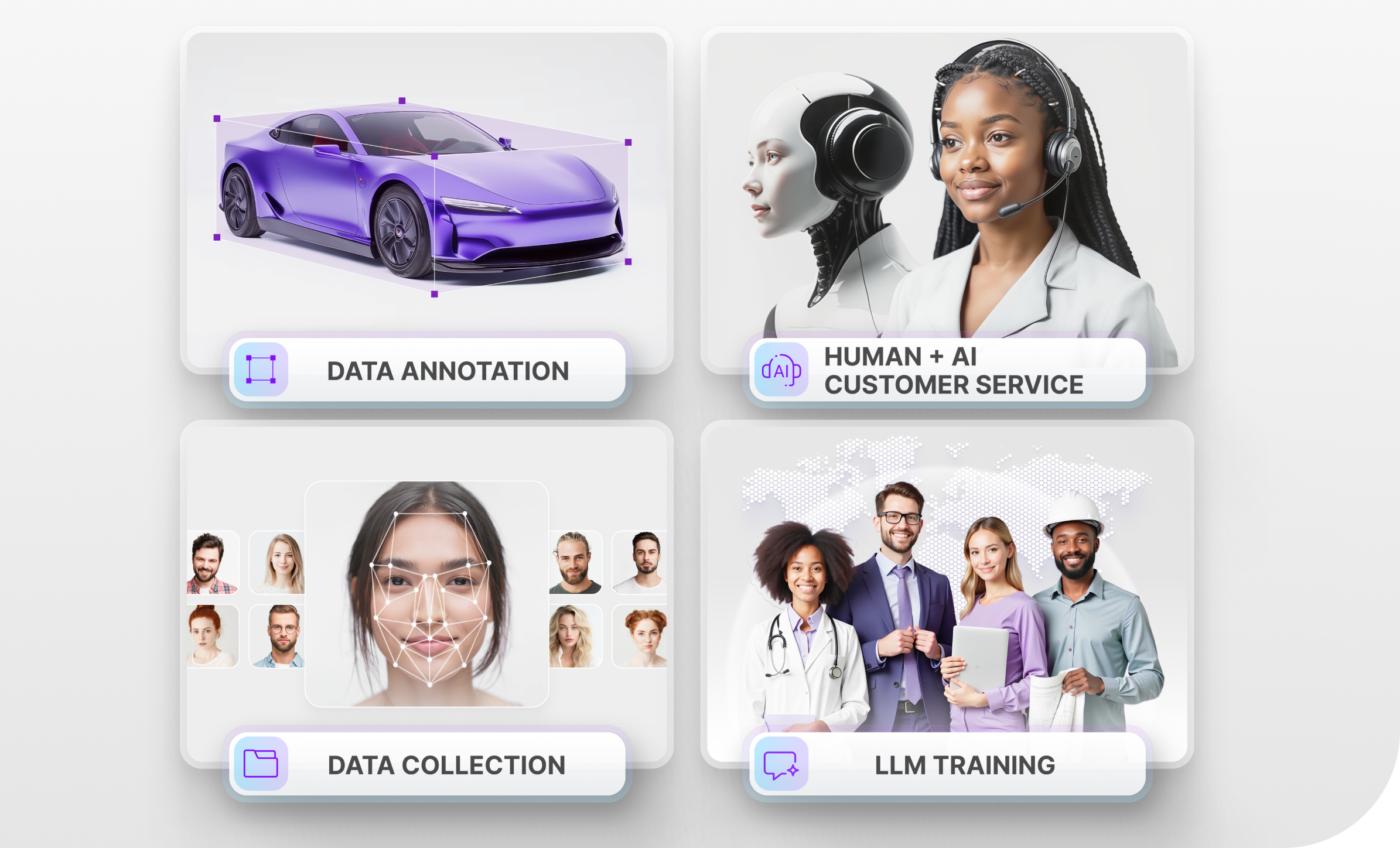

Trust Mindy Support With All of Your Data Annotation Needs

Mindy Support is a global company for data annotation and business process outsourcing, trusted by Fortune 500 and GAFAM companies, as well as innovative startups. With nine years of experience under our belt and offices and representatives in Cyprus, Poland, Romania, the Netherlands, India, the UAE and Ukraine, Mindy Support’s team now stands strong with 2,000+ professionals helping companies with their most advanced data annotation challenges.