Human Driving Will Be Outlawed By 2050

As far-fetched as it may sound, we could be witnessing the end of human driving. A new report from IDTechEx predicts that cars could fully take over by 2050. This is a very ambitious claim, given that most new cars today offer only about Level 1 automation, a long way from the full automation of Level 5. Nevertheless, the report states that vast improvements to autonomous vehicle technologies such as radar, lidar, HD cameras, and software have already propelled robotaxis to the cusp of market-readiness. The report also found that autonomous vehicles will become a massively disruptive technology, growing at a rate of up to 47% and transforming the auto market over the next two decades.

Since the field of autonomous vehicles is growing so quickly, let’s take a look at the state of self-driving cars today and some of the challenges companies need to overcome to reach Level 5 automation.

Where Are We Today?

As we mentioned earlier, many of the cars being produced today offer only Level 1 automation, which covers features like lane-keep assist and adaptive cruise control. More advanced systems, such as Tesla’s Autopilot and GM’s Super Cruise, offer Level 2 automation. This means that the car can manage speed and steering on its own, but the driver must pay constant attention in case they suddenly need to take control.

That said, some car manufacturers are already experimenting with Level 3. Honda, for example, released the Honda Legend Hybrid EX earlier this year. Its most interesting Level 3 function is Traffic Jam Pilot, which allows the car to operate itself during low-speed traffic jams on the highway. The driver cedes control to the vehicle and can, say, watch a movie on the infotainment screen, but must be ready to intervene if called upon.

While some advancements are being made in Level 3 automation, many manufacturers are skipping Level 3 and focusing on the more exciting Level 4, where cars can operate with full autonomy within a bounded area. This is understandable, since revolutionizing the transport of people and goods is more lucrative than offering a fancy feature on a luxury car. In addition, whenever a company implements a new autonomous driving feature, it needs approval from many different regulatory agencies, which is a real hassle. Simply adding a new feature may therefore not be worth the time spent on getting the necessary approvals.
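To keep the levels straight, here is a minimal Python sketch of the automation levels as this article describes them. The enum and the two helper functions are hypothetical names introduced for illustration only, and the comments paraphrase the descriptions above rather than any official definition.

```python
from enum import IntEnum


class AutomationLevel(IntEnum):
    """Automation levels as summarized in this article (illustrative only)."""
    LEVEL_1 = 1  # assist features such as lane-keep assist, adaptive cruise control
    LEVEL_2 = 2  # car manages speed and steering; driver pays constant attention
    LEVEL_3 = 3  # car drives itself in limited situations; driver ready to intervene
    LEVEL_4 = 4  # full autonomy within a bounded area
    LEVEL_5 = 5  # full automation


def driver_must_pay_constant_attention(level: AutomationLevel) -> bool:
    """Hypothetical helper: up to Level 2 the driver supervises at all times."""
    return level <= AutomationLevel.LEVEL_2


def driver_must_be_ready_to_intervene(level: AutomationLevel) -> bool:
    """Hypothetical helper: up to Level 3 the driver may be called upon."""
    return level <= AutomationLevel.LEVEL_3


for level in AutomationLevel:
    print(
        level.name,
        driver_must_pay_constant_attention(level),
        driver_must_be_ready_to_intervene(level),
    )
```

The jump that matters most is between Level 3 and Level 4: it is the first point in this sketch where neither helper returns `True`.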

What’s Preventing Companies From Reaching Level 4 and 5 Automation?

With all of the talk about self-driving cars, we have to ask the question: why aren’t we seeing fully autonomous vehicles on the road yet? Well, there are some major obstacles that need to be overcome:

- Road Conditions – Road conditions are very unpredictable and vary from one location to another. For example, one stretch of road may have clear lane markings, while on another the paint may be faded, making it difficult for the AI system to interpret the lanes. Also, road infrastructure, regulations, and driving customs vary from country to country, even city to city, and are overseen by a multiplicity of bodies. So it’s not clear which institutions, if any, have the power and reach to regulate and standardize the driving environment.

- Weather Conditions – Similar to human drivers, self-driving vehicles can have trouble “seeing” in inclement weather such as rain or fog. The car’s sensors can be blocked by snow, ice, or torrential downpours, and their ability to “read” road signs and markings can be impaired. The vehicle relies on LiDAR and radar for visibility and navigation, but each has its shortcomings. LiDAR works by bouncing laser beams off surrounding objects and can give a high-resolution 3D picture on a clear day, but it struggles to see through fog, dust, rain, or snow.

- LiDAR Interference – LiDAR interference has been shown to occur when the optical axes of two LiDAR units intersect and a hard or volumetrically scattering target is present at the point of intersection. If multiple self-driving vehicles are at a busy intersection, the LiDAR on each vehicle could interfere with the others. The presence of other LiDAR sources could cause incorrect data to be returned, possibly resulting in collisions.

Highly Accurate Data Annotation Could Help Us Overcome These Obstacles

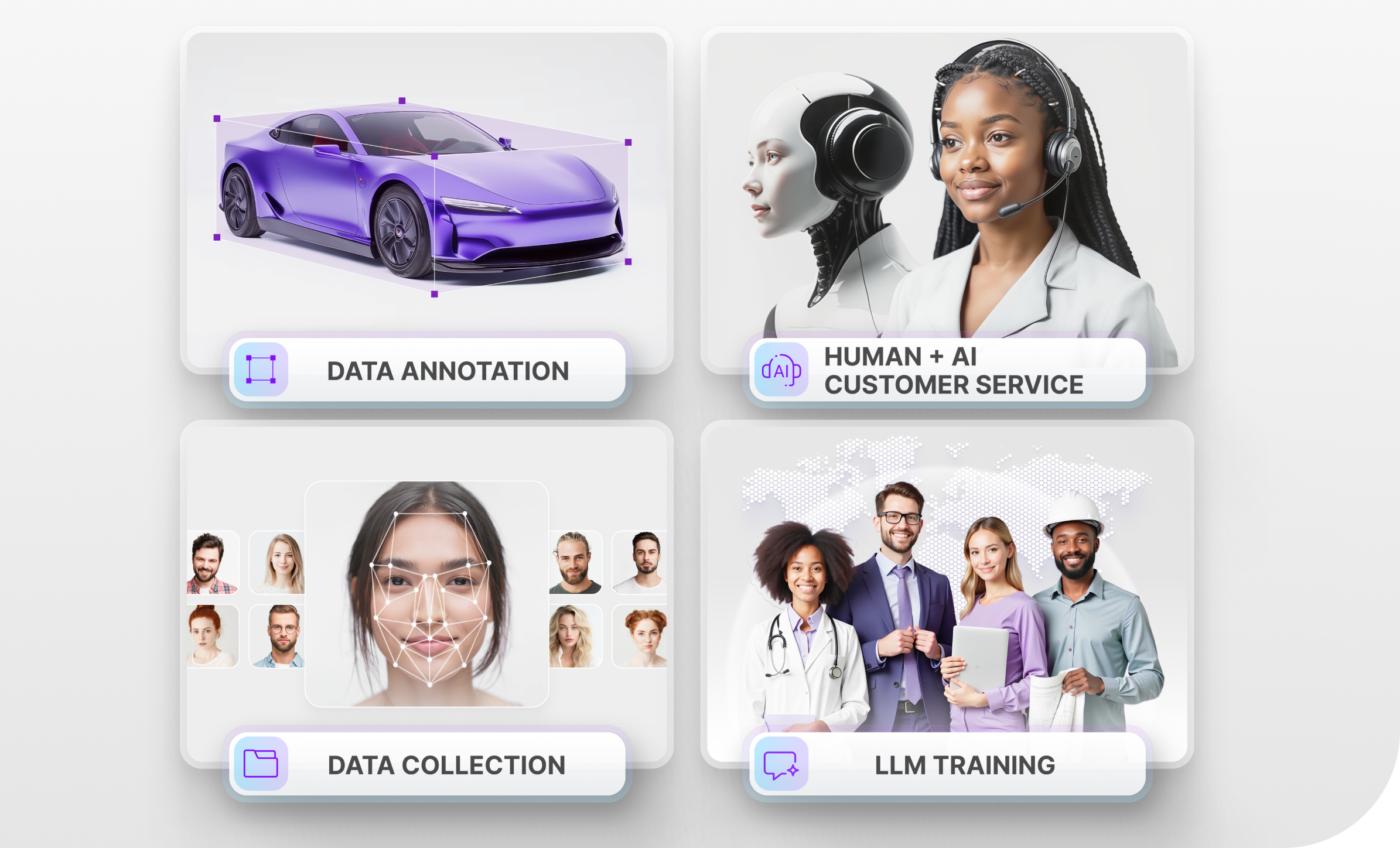

Training neural nets is something like holding a child’s hand when crossing the road and teaching them through constant experience, repetition, and patience. This is where the data annotation process can play a key role. Human data annotators prepare the raw datasets with techniques such as 2D/3D boxes, lines and splines, semantic segmentation, and many others to help the car “understand” all of the possible scenarios it may encounter on the road. Since data annotation is such a time-consuming process, many companies choose to outsource this work to providers like Mindy Support.
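To make the idea of a 2D box annotation more concrete, here is a minimal Python sketch of what a single labeled box might look like. The `Box2D` class, its field names, and the sample coordinates are assumptions made up for illustration, not the format of any particular annotation tool. The `iou` function computes intersection over union, one common way to measure how closely two boxes drawn around the same object agree.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box2D:
    """Hypothetical 2D box annotation in pixel coordinates."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def area(self) -> float:
        # Clamp at zero so an inverted (empty) box has no area.
        width = max(0.0, self.x_max - self.x_min)
        height = max(0.0, self.y_max - self.y_min)
        return width * height


def iou(a: Box2D, b: Box2D) -> float:
    """Intersection over union of two boxes, between 0.0 and 1.0."""
    overlap = Box2D(
        "overlap",
        max(a.x_min, b.x_min),
        max(a.y_min, b.y_min),
        min(a.x_max, b.x_max),
        min(a.y_max, b.y_max),
    )
    intersection = overlap.area()
    union = a.area() + b.area() - intersection
    return intersection / union if union > 0 else 0.0


# Two annotators outline the same pedestrian slightly differently.
first = Box2D("pedestrian", 10, 20, 50, 100)
second = Box2D("pedestrian", 20, 20, 60, 100)
print(iou(first, second))  # 0.6
```

A score near 1.0 means the two boxes nearly coincide, while a low score suggests the object should be reviewed again.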

Trust Mindy Support With All of Your Data Annotation Needs

Regardless of the volume of data you need annotated or the complexity of your project, Mindy Support can assemble a team to carry out your project and meet your deadlines. We are the largest data annotation company in Eastern Europe, with more than 2,000 employees in six locations across Ukraine and in other locations worldwide. Our size and location allow us to source and recruit the needed number of candidates within a short time frame, and we can scale your team without sacrificing the quality of the work provided. Contact us today to learn more about how we can help you.