How AI Could Make Policing Fairer

Companies across industries are implementing AI technologies to streamline processes and eliminate manual work. The use of AI in policing, however, has raised ethical questions, and many people fear that it gives the police too much power. Still, like all technologies, AI is a double-edged sword, which means we must implement the right safeguards to make sure it works as intended. Let’s take a closer look at AI technology and how it could make policing fairer.

Facial Recognition

Recent protests over police brutality have brought AI technology like facial recognition into the national spotlight. The fear is that police and federal agencies will use the pictures protesters posted online to identify them in real life. This prompted companies like Amazon to restrict police access to its facial recognition product, Rekognition, and IBM announced that it is stopping facial recognition research and development altogether because of civil rights concerns. What’s even more striking is the bias in facial recognition systems. A recent MIT study tested three technologies that identify people’s gender, and the error rate for dark-skinned women was 34%, which is 49 times higher than for white men.
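To make the idea of a per-group error rate concrete, here is a minimal Python sketch of how such a comparison could be computed. The function name, the group labels, and the sample records are all made up for illustration; they are not taken from the MIT study or from any vendor's product.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """Return {group: share of records where the prediction was wrong}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, label, prediction in records:
        totals[group] += 1
        if prediction != label:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


# Made-up evaluation records: (group, true label, predicted label).
sample = [
    ("group_a", "female", "female"),
    ("group_a", "female", "male"),
    ("group_a", "female", "female"),
    ("group_a", "female", "male"),
    ("group_b", "male", "male"),
    ("group_b", "male", "male"),
    ("group_b", "male", "male"),
    ("group_b", "male", "female"),
]

rates = error_rate_by_group(sample)
print(rates)
```

Reporting the error rate for each group separately, rather than one overall number, is what exposes this kind of gap: a system can look accurate on average while failing far more often for one group.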

However, we need to keep in mind that a facial recognition system is only as accurate as the data used to train it. Human data annotators perform landmark annotation, which means placing small points along the facial features in each image. This data is then fed into the system so that it can learn to recognize facial features in real life. If the training data is not properly annotated, the system can produce false positives. As the technology matures, recognition will become more accurate, but mistakes will inevitably happen. If we can bring accuracy as close to 100% as possible, this could make policing fairer by helping to prevent the wrong people from being arrested.
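As a rough illustration of what "properly annotated" might mean in practice, the Python sketch below checks a single landmark annotation for two basic problems: missing points and points that fall outside the image. The record layout, the landmark names, and the `annotation_problems` helper are assumptions made for this example; real annotation schemes differ from project to project.

```python
# Hypothetical set of landmark names; real annotation schemes vary.
REQUIRED_LANDMARKS = {"left_eye", "right_eye", "nose_tip", "mouth_left", "mouth_right"}


def annotation_problems(annotation):
    """Return a list of human-readable problems found in one annotation."""
    problems = []
    width, height = annotation["width"], annotation["height"]
    points = annotation["landmarks"]

    # Every required landmark must be present.
    for name in sorted(REQUIRED_LANDMARKS - set(points)):
        problems.append(f"missing landmark: {name}")

    # Every annotated point must fall inside the image.
    for name, (x, y) in sorted(points.items()):
        if not (0 <= x < width and 0 <= y < height):
            problems.append(f"out of bounds: {name}")
    return problems


# Made-up annotations for a 100x100 pixel image.
good = {"width": 100, "height": 100, "landmarks": {
    "left_eye": (30, 40), "right_eye": (70, 40), "nose_tip": (50, 60),
    "mouth_left": (35, 80), "mouth_right": (65, 80)}}
bad = {"width": 100, "height": 100, "landmarks": {
    "left_eye": (30, 40), "right_eye": (170, 40), "nose_tip": (50, 60)}}

print(annotation_problems(good))
print(annotation_problems(bad))
```

Simple automated checks like these only catch obvious mistakes. Whether a point sits on the correct facial feature still has to be reviewed by a person.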

Natural Language Processing

When we hear the term natural language processing, we tend to think of personal assistants like Alexa and Siri, but the same technology could be used to make policing fairer. Nowadays, police officers wear body cameras that record all of their interactions with people, and the language they use could be analyzed to determine whether or not they treat one group of people better than another. The information police officers receive from dispatchers about where they need to go should also be analyzed for potential biases. For example, let’s say the police officer receives the following information: Asian female, 5’4” in a parking lot. How does this information impact the actions taken by the police officer? Perhaps there is a need to anonymize information about suspects.

Machine learning can help police departments analyze all of this information and take appropriate action. Based on the results, they can implement more training or create stricter and smarter rules for how police officers should interact with people.

Using AI to Predict Future Crimes

Machine learning algorithms are being used to analyze data about neighborhoods where serious crimes are more likely to occur. Cities like Chicago are taking this one step further to predict who will commit the crimes. Civil rights groups such as the ACLU have raised concerns about this technology because the data used to train the systems is based on historical police data. For example, if the algorithm relies only on historical arrest data, it will create a feedback loop where the algorithm makes decisions that reflect and reinforce stereotypes about neighborhoods perceived as “bad” or “good” instead of the number of actual crimes being reported. Once again, we return to the problem of accurate training data. Any data the system relies on for training must be accurately annotated to make sure that it encompasses a wide variety of information and that the algorithm understands and calculates everything correctly.
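The feedback loop can be illustrated with a deliberately simplified Python sketch. It is a toy model, not a description of how any real predictive policing system works: it assumes two neighborhoods with identical underlying crime, a single patrol per day that always goes wherever past recorded arrests are highest, and arrests that are only recorded where the patrol is present. The function name, the neighborhood names, and the starting numbers are invented for the example.

```python
def simulate_patrols(initial_arrests, days, arrests_per_patrol=1):
    """Toy model: each day the patrol goes where recorded arrests are highest."""
    recorded = dict(initial_arrests)
    for _ in range(days):
        # The "algorithm" only looks at past arrest counts.
        target = max(recorded, key=recorded.get)
        # Arrests are only recorded where the patrol was sent.
        recorded[target] += arrests_per_patrol
    return recorded


# Two neighborhoods that start almost equal in recorded arrests.
result = simulate_patrols({"north": 11, "south": 10}, days=100)
print(result)  # {'north': 111, 'south': 10}
```

A one-arrest difference at the start grows into a gap of more than a hundred, even though nothing in the model says the two neighborhoods differ in actual crime. The recorded data ends up reflecting where the police looked rather than where crime happened.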

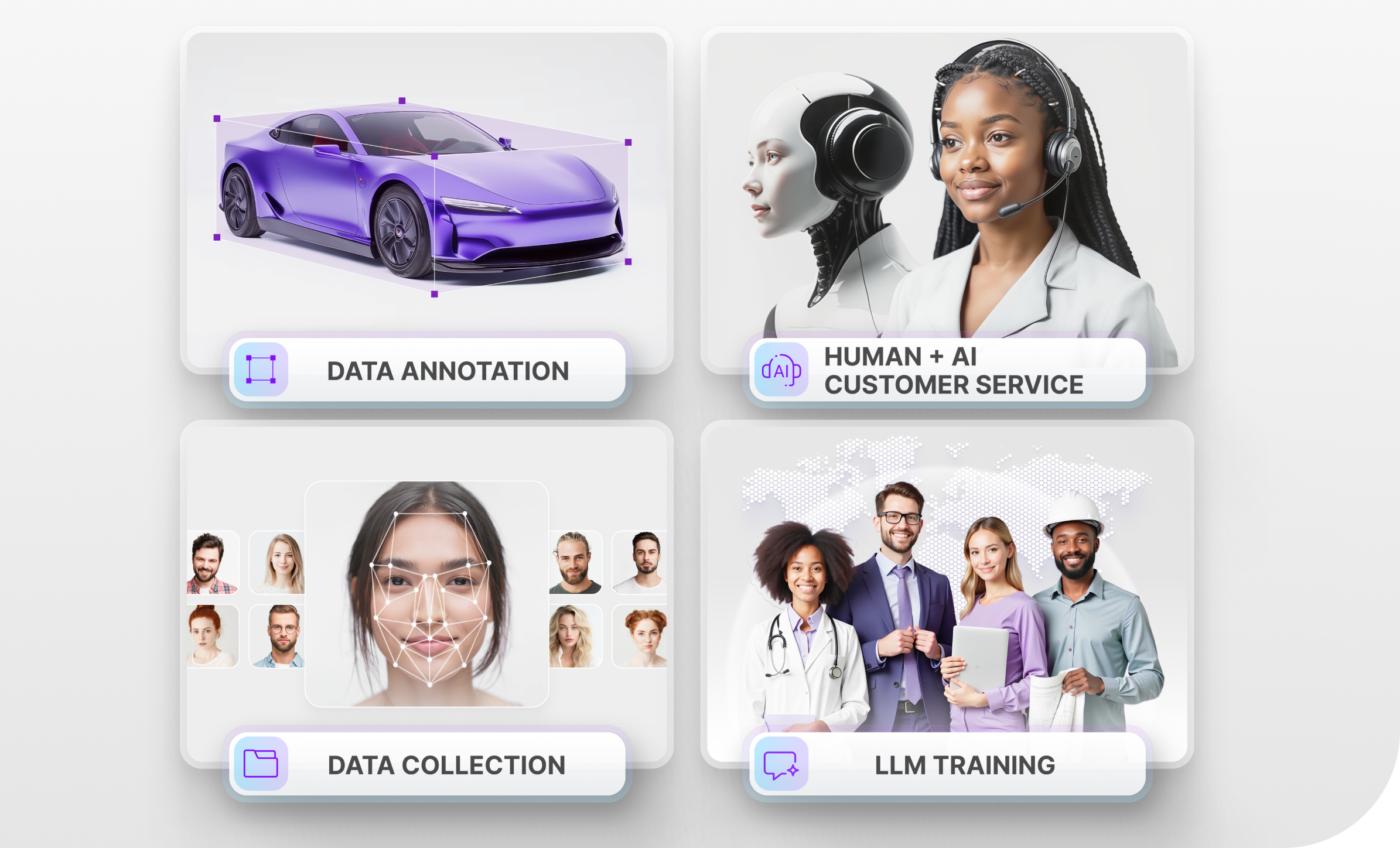

Mindy Support is Helping to Create More Accurate AI Systems

As we saw in the examples above, the accuracy of AI systems depends heavily on high-quality annotated data. If data annotation is taking up too much of your time, consider outsourcing this work to Mindy Support. Regardless of the size of your project, we will be able to quickly find the necessary number of people to perform the annotation work. If you later need to scale the project, we will be able to do that as well without compromising on quality. We have an extensive track record of completing data annotation projects and getting the best results for our clients.